2020年12月30日

Jerry

11125

2021年1月17日

网络请求不可避免会遇上请求超时的情况,在 requests 中,如何设置请求超时时间又如何设置重试呢?

直接上代码

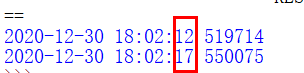

1、设置请求超时时间5s timeout=5 来指定

def get_url():

Headers = {

'content-type': 'application/json', 'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64)AppleWebKit/537.36 (KHTML, like Gecko) Chrome/74.0.3729.108 Safari/537.36'

}

url = "https://www.google.com.hk"

print(datetime.datetime.now())

try:

res = requests.get(url=url, headers=Headers, timeout=5)

except Exception as e:

pass

print(datetime.datetime.now())

五秒超时

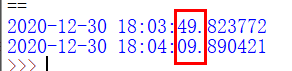

2、设置超时重试,如下

def get_url1():

Headers = {

'content-type': 'application/json', 'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64)AppleWebKit/537.36 (KHTML, like Gecko) Chrome/74.0.3729.108 Safari/537.36'

}

url = "https://www.google.com.hk"

print(datetime.datetime.now())

s = requests.Session()

s.mount('http://', HTTPAdapter(max_retries=3))

s.mount('https://', HTTPAdapter(max_retries=3))

try:

res = s.head(url=url, headers=Headers, timeout=5)

except Exception as e:

pass

print(datetime.datetime.now())

超时重试三次,加上第一次应该是一共四次 耗时20s

上面这种重试是requests自己封装好的,但是不太满足个性化的需求 于是有了第三种超时重试

3、直接就是死循环,重试多次,一直到成功:

def get_url2():

Headers = {

'content-type': 'application/json', 'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64)AppleWebKit/537.36 (KHTML, like Gecko) Chrome/74.0.3729.108 Safari/537.36'

}

url = "https://www.google.com.hk"

keep = True

while keep:

try:

res = requests.head(url=url, headers=Headers, timeout=5)

keep = False

except Exception as e:

print(datetime.datetime.now())

可以看到一直重试

死循环可不行啊,加个次数就好了,对上面代码改进下,有了第四种

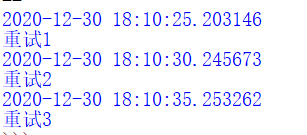

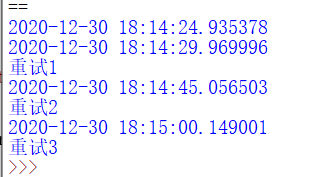

4、自定义重试次数

def get_url3():

Headers = {

'content-type': 'application/json', 'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64)AppleWebKit/537.36 (KHTML, like Gecko) Chrome/74.0.3729.108 Safari/537.36'

}

url = "https://www.google.com.hk"

keep = True

maxtimes = 3

count = 0

print(datetime.datetime.now())

while keep and count < maxtimes:

try:

res = requests.head(url=url, headers=Headers, timeout=5)

keep = False

except Exception as e:

print(datetime.datetime.now())

count = count + 1

print('重试' + str(count))

这时候有的人会问,这第四种和第二种有啥锤子区别???看看下面这个例子

5、自定义时间间隔。

某些情况下,爬虫的时候短时间内重试多次返回的东西是一样的,这时候就可以设置多少间隔后再重试。

def get_url4():

Headers = {

'content-type': 'application/json', 'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64)AppleWebKit/537.36 (KHTML, like Gecko) Chrome/74.0.3729.108 Safari/537.36'

}

url = "https://www.google.com.hk"

keep = True

maxtimes = 3

count = 0

print(datetime.datetime.now())

while keep and count < maxtimes:

try:

res = requests.get(url=url, headers=Headers, timeout=5)

keep = False

except Exception as e:

print(datetime.datetime.now())

# 延时10s后重试

time.sleep(10)

count = count + 1

print('重试' + str(count))

这样就可以控制每次重复请求的时间间隔,或者中间做点其他的东西。

原创文章,转载请注明出处:

https://jerrycoding.com/article/reqtimeout

《学习笔记》

0

微信

支付宝